DashboardGenius: Bridging Trust with Transparent AI Reasoning 🧠

We are often told that AI is a "black box" that cannot be trusted. In business intelligence especially, accuracy isn't just a nice-to-have; it's the whole ball game.

We are in the middle of a trial with our first enterprise user of DashboardGenius, and one piece of feedback has stood out: trust is paramount.

The Challenge: "Is this correct?"

DashboardGenius relies heavily on existing data warehouses. When an answer from the AI looks "wrong," there are two possible explanations:

- Hallucination: The AI made up a fact.

- Revelation: The AI is correct, but the underlying data (or the user's belief about the data) is wrong.

Surprisingly often, it's the latter. The AI reveals faults in existing systems or gaps in data quality. But without transparent reasoning, the user naturally defaults to assuming "the AI is wrong."

The Solution: Transparent Thought Signatures 💭

To bridge gaps in expectations and build trust, we've implemented a feature that exposes the AI's "Thought Signature" along with the function and tool calls it made.

Leveraging features like Gemini's thought signatures, we now store the exact reasoning steps and database queries the AI performed to arrive at an answer.
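To make "storing the reasoning steps and database queries" a bit more concrete, here is a minimal sketch of what such a stored record could look like. This is not our production schema and it does not call the Gemini API; the class names (`ToolCall`, `AnswerTrace`), the field names, and the sample question and query are all hypothetical, made up purely for illustration.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ToolCall:
    """One function/tool invocation made while answering (hypothetical shape)."""
    tool_name: str
    query: str      # the database query text that was executed
    row_count: int  # how many rows the query returned


@dataclass
class AnswerTrace:
    """Everything needed to show a user how an answer was produced."""
    question: str
    answer: str
    reasoning_steps: list[str] = field(default_factory=list)
    tool_calls: list[ToolCall] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses, so tool calls are included.
        return json.dumps(asdict(self), indent=2)


# Illustrative data only: the question, answer, and query are invented.
trace = AnswerTrace(
    question="How many units shipped last week?",
    answer="1,240 units",
)
trace.reasoning_steps.append("Sum the units column of the shipments table.")
trace.tool_calls.append(
    ToolCall(tool_name="run_sql", query="SELECT SUM(units) FROM shipments", row_count=1)
)

stored = trace.to_json()  # this string is what would be saved next to the answer
```

Keeping the reasoning steps and the tool calls in one record means the "how" can always be retrieved together with the "what."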

See it in Action

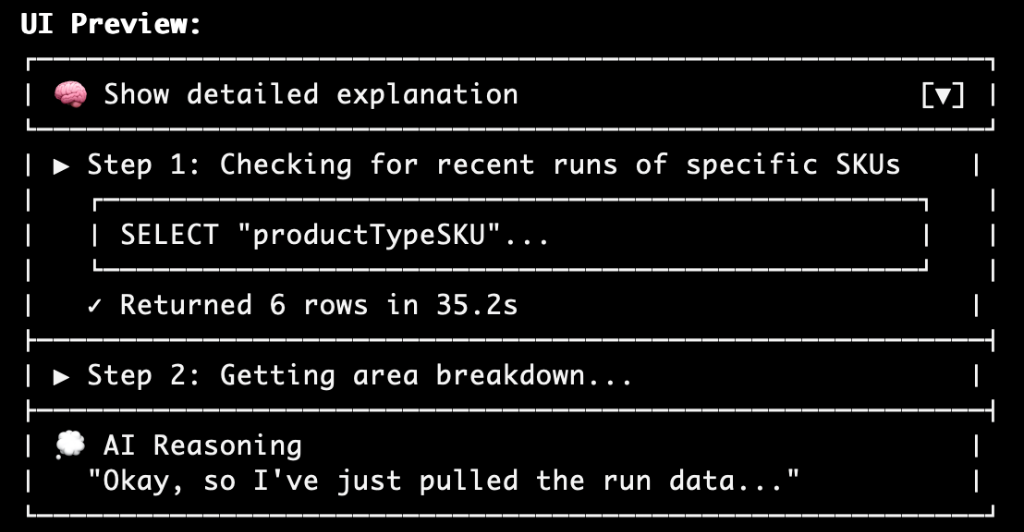

We started with a mockup (courtesy of Claude) and quickly moved to implementation.

The Mockup:

The Deployed Feature:

Now, users can expand a "Show details" section to see the step-by-step logic.

Why this matters

By allowing users to view the explanation and trace the data queries, we empower them to verify the answers. This transparency is the key to comfortable adoption. It turns "blind faith" into "verified trust."
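One way a user (or an automated check) might verify an answer is to re-run the recorded query and compare it with what the AI saw. The sketch below assumes a read-only SQL query was stored with the answer; it uses an in-memory SQLite database as a stand-in for a real warehouse, and the helper names (`rerun_query`, `matches_recorded`) and the sample table are hypothetical.

```python
import sqlite3


def rerun_query(conn: sqlite3.Connection, query: str) -> list[tuple]:
    """Re-execute a recorded read-only query and return its rows."""
    return conn.execute(query).fetchall()


def matches_recorded(conn: sqlite3.Connection, query: str, recorded_rows: list[tuple]) -> bool:
    """True if the query still returns the rows that were recorded with the answer."""
    return rerun_query(conn, query) == recorded_rows


# Stand-in for the data warehouse, with invented sample data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE shipments (line TEXT, units INTEGER)")
conn.executemany("INSERT INTO shipments VALUES (?, ?)", [("A", 700), ("B", 540)])

recorded_query = "SELECT SUM(units) FROM shipments"
recorded_rows = [(1240,)]  # what the AI saw when it produced the answer

still_valid = matches_recorded(conn, recorded_query, recorded_rows)
```

If the re-run result differs from the recorded one, the data has changed since the answer was generated. If the two match but the number still looks wrong, the underlying data is the place to investigate.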

We hope this transparency delivers significant benefits to our first enterprise customer's factory operations this January! 🏭
